Messy Data IRL: Building Infrastructure For Tidy Data

by Isaac Flores-Huerta

What does it look like when a dozen data scientists and engineers wrangle thousands of moving data sources around millions of decision-makers and their networks? This is the first in a series of how the Ripple team is handling messy data at our scale; from rapidly identifying how to transform data to real-time anomaly detection of processed data to the final product. When we don’t find an existing solution that fits our needs, we leverage open-source projects as a foundation to build our own.

Ripple is BlueLabs’ proprietary platform that identifies the personal and professional networks of American decision-makers. Ripple’s rapid expansion from an R&D idea to product has brought about massive growth in the amount of data that we’re ingesting and managing as a team. This growth was also accompanied by the tremendous technical and organizational challenges that one would expect in terms of extending processes that need to scale reliably around data we could make no assumptions about. We looked to the traditional “Extract, Transform, Load” (ETL) world for help solving this:

1. Extract: The process where relevant data is pulled from raw data, different sources, or data sinks

2. Transform: Data gets processed and transformed into the correct and relevant shape that the company needs

3. Load: The output gets loaded into a database or data warehouse

As we searched for enterprise and open source solutions, we ultimately decided to create our own data pipeline system. This decision came naturally due to a couple of constraints. The product started as an exploration of what was feasible, and so we weren’t ready to commit to large and expensive enterprise solutions. Second, there are many moving parts, and we needed to plug in custom checks, custom tooling and have the entire infrastructure live within our existing ecosystem. We didn’t find the flexibility we wanted in the solutions we explored, so we built our own!

We encountered new challenges ingesting data and learned some hard truths and big lessons, a few of which we’ve boiled down for this series. Our work hit some repeating themes we will discuss in the series. These include:

Scale: In the beginning, we only had a handful of data points. A year later we have over a thousand moving pieces and more than 40 million decision-makers.

Heterogeneity: Our data comes from everywhere. We reconcile records across public and private datasets, database images, APIs, PDFs, hard drives, and the occasional CD. At one point we even received a paper copy of records!

Reliability: Data is a first-class citizen in our ecosystem. We’ve set up a system for catching and fixing data reliability issues as they arise to ensure a premium data experience.

Metadata: Data governance and data provenance are big these days, and our data presents some unique challenges in this respect. Our most recent and exciting foray on the data management side has been into using metadata to better understand how data flows through our systems.

Using open source solutions as our foundation, we built Maestro to address these challenges. Maestro is our internal toolkit that orchestrates almost everything that needs to happen on the Ripple team; from ingestion to delivery. Not everything can be automated, but Maestro helps the team identify when and where their judgments are needed.

Scale: Always a challenge when transitioning from minimum viable product to full-fledged product.

We won’t claim to have solved the problem of scale, but we have managed to create a human-centric framework to productively engage problems related to scale. One of the biggest scale-related challenges we encountered was figuring out the problem of orchestrating pipeline runs as well as team members across job functions.

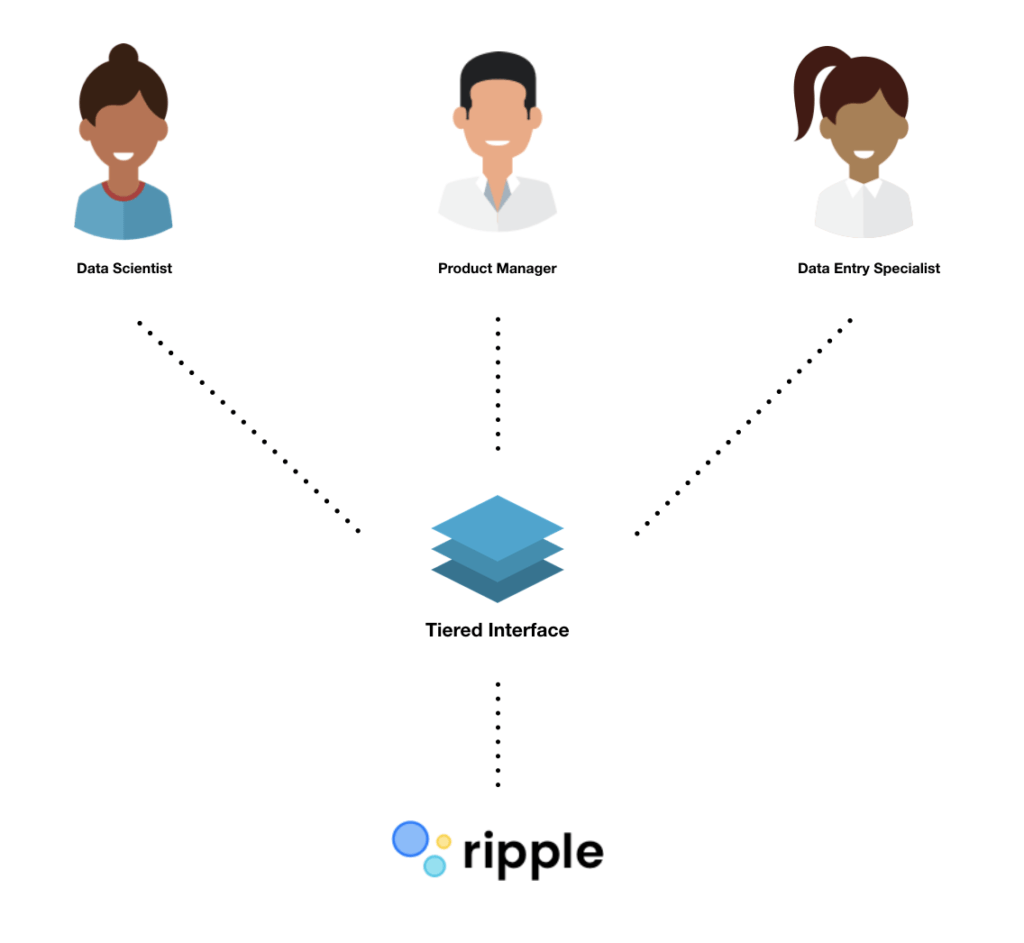

Since we’re a small team with different backgrounds – data entry specialists, product managers, engineering leads, data analysts – we wanted to be able to create pipeline processes that anyone could understand. With this in mind, we designed a three-tiered system to help us manage complexity at every level.

The first tier is the least flexible and revolves around configuration files that anyone can put together – it enables people to follow the rules. The Ripple data team identified key decision points for people to push data through and turned them into a simple-to-prepare configuration file composed of SQL abstractions. Ultimately, our system runs on SQL, the second tier, so anyone with knowledge and proper authentication can break the rules of the system and prepare custom SQL code should a data source require it. This makes it easy to prototype or develop promising extensions to our data model without much overhead. Finally, a data scientist or engineer can change the rules should they know Python. The end result is a pipeline of Python modules that generate and run SQL. The choice for our pipelines to revolve around SQL is simple – we’re a data science company! It’s in our DNA, from analyst to project manager, and it’s an approachable language for newcomers. This method allows every member of our team to:

Easily understand each process. Processes are small, modular, and self-explanatory to everyone working in the pipeline. Each process has a templated SQL portion that is understood by team members.

Process data of any scale. Whether it’s 100 million rows or 10 thousand rows, we don’t need to set up special EC2 instances with more memory or write memory efficient code because SQL and our database takes care of that.

Easily test results of the pipeline. Anyone can write arbitrarily complex business logic to test intermediate or final results. Although we log and track universal QC metrics, the nature of heterogeneous data requires custom rules to be written.

Anyone with a data background understands SQL. SQL allows for universal access, quick exploration, and introspection of the data in a way that other methods don’t. Plus, almost everyone at BlueLabs knows SQL, so anyone outside of the team can help at any point and time.

Heterogeneity: We can make no assumptions about the shape of our data

When searching for solutions, the heterogeneity of our data was one of our biggest impediments for using an out-of-the-box solution. In effect, we can make no assumptions about the shape of our data. We see data in flat formats, in graph formats, and in various levels of normal form.

Furthermore, at an atomic level, we have no guarantees about how our data will be formatted. For example, the distinction between name and title might seem simple enough, but in Georgia, we found over one hundred instances of the name “Princess” as a first name and not as a title. This is part of where the human-centric framework comes into play because people need to review the raw data that passes through the pipeline. We leverage automatic cleaning, but data is always pushing the bounds and our universe of automatically handled edge cases continues to expand. As the number of edge cases increases, we can more easily extend cleaning functions to manage additional edge cases and write test cases for future analysts.

When working with such heterogeneous data, it can be difficult to define a data model, but this process re-emphasized for us the importance of defining that model early. Without a data model, you risk becoming disoriented and introducing unnecessary challenges. After establishing a data model, one can start to make assumptions about it; these assumptions enable the ability to automate and test data using abstractions as it progresses through the pipeline. Our established data model allows us to get the most of the system we built. We built levers anyone can pull to fit our messy data into the shape that we ultimately want. Everyone on the team is trained to figure out how to deal with data in such a way that will fit into our existing data model. Getting data to the state that we want early on allows us to build out more fully automated processes downstream.

Maestro is how we are tackling the tough challenges regarding the scale and heterogeneity of data we process. We’re always improving our systems, and while not everything can be automated in the world of messy data, we’re making some headway! For now, messy data means a human still has to do some sort of manual process, and this will likely always be the case. However, that doesn’t mean we can’t scale outwards or strive for faster ingestion processes. On the Ripple team, we believe that everyone should have the foundational knowledge and necessary framework around data to help them be productive. By leveraging the simplicity and ubiquity of SQL, every team member is an expert on the pipeline process.

Come back soon for our second part of the miniseries where we’ll talk about how Maestro helps us deliver reliable data fast and efficiently! We’ll also touch upon the particularly thorny problem that is reliability and what it means for us as we continue to source data from all over the web.